Before anyone else says it, yes, I know the title over-promises. Snappy comebacks aren’t exactly my thing, because they are typically simple-minded and reductionist responses to issues that are, to borrow Dr. Ben Goldacre’s line, “more complicated than that”.

Category: Skepticism

Scientific scepticism (or, skepticism) is an attitude towards scientific enquiry, rather than paranoia, cynicism, or conspiratorial thinking.

As anyone who reads Synapses would know, I’m a proponent of scientific skepticism. What that means is something along the lines of “the practice or project of studying paranormal and pseudoscientific claims through the lens of science and critical scholarship, and then sharing the results with the public” (from Daniel Loxton’s 2013 essay, “Why is there a skeptical movement?”).

Skepticism should not be conflated with hyperskepticism, as Caleb Lack and I argue in our book on critical thinking. So, it doesn’t (necessarily) mean believing in chemtrails, or Big Pharma deliberately poisoning you, or your cellphone making you infertile (it’s a bit of a pity that that one isn’t true, actually).

This post isn’t about “fake news”, a term which has gone from useful to meaningless in record time. It’s about nonsense published as if it’s news, and about the willingness on the part of some publications (okay, one in this case) to take a published piece of nonsense and then make it even worse in order to get you to visit their website.

Late last year, I had the pleasure of meeting outgoing IFT President, Colin Dennis, at a talk I gave at the 2015 SAAFoST conference in Durban. For those of you who are interested in food science and nutrition, and who don’t know of SAAFoST, I’d like to point you in the direction of their “Food Facts Advisory Service”, where you can find a number of informative pieces on food facts and fears.

In any event, Prof. Dennis suggested that he would be keen on inviting me to speak at a future IFT event. To my great surprise and pleasure, that invitation ended up being to give one of the keynote talks – alongside such luminaries as Dr Ben Goldacre – at the IFT congress in Chicago, which has just concluded today.

Slate recently published an interesting piece on “scientism”, which both perpetuates a caricature of science and rationality, and also points to a genuine problem with some folk who can’t see beyond science and reason as tools for addressing our political and social dilemmas.

“Scientism” is the belief that all we need to solve the world’s problems is, you guessed it, science. People sometimes sub in the phrase rational thinking, but it amounts to the same thing. If only people would drop religion and all their other prejudices, we could use logic to fix everything.

Last week, Neil deGrasse Tyson offered up the perfect example of scientism when he proposed a country of #Rationalia, in which “all policy shall be based on the weight of evidence.”

Tyson is a very smart man, but this is a very stupid tweet, and a very stupid idea. It is even, we might say, unreasonable and without sufficient evidence. Of course imagining a society in which all actors behave logically sounds appealing. But employing logic to consider the concept reveals that there could be no such thing.

There are two very different things being described here. To base all policy on the weight of evidence is a fundamentally different thing to desiring a society in which all actors are Mr. Spocks, all logic and no emotion. In conflating them, Jeffrey Guhin sets up the caricature I mentioned at the top.

Many pro-science and rationality folks, including myself and the vast majority of scientific skeptics I’ve been hanging out with at conferences for the last decade, would agree with Tyson’s tweet while rejecting the implication that it means we need to be all logic, all the time.

The reason for this is simple, and points to Guhin’s misunderstanding of the broader – and social – context to scientific reasoning. Emotions, intuitions, aesthetic preferences and what have you are all things that make us human, and social creatures, yes – but they are also data points in themselves.

In other words, policy that is based on the “weight of evidence” does not need to be blind to these human details, and is in fact compromised if it’s not sensitive to these details. We care, for example, about compliance with the law, so it would be irrational to enact a law that caused such a negative (emotional) reaction that compliance was impossible.

We also care about happiness, meaning that it would be irrational to treat each other solely as logical agents. Doing so would make marriages and friendships unbearable, and parent-child relations dysfunctional. Our relationships admit to – and even cherish – the idiosyncrasies of our various subjective points of view.

Guhin does go on to make various good points about scientific overconfidence, reminding us that “experts often get it wrong”. But this is again to rely on a misunderstanding of science – good science knows that it’s fallible, and this is in fact one of its key virtues when compared with pseudoscience.

The fact that scientific reasoning leads to errors (he mentions scientific racism, phrenology, eugenics and other examples) is only a crippling problem for science if there’s some better alternative for resolving empirical problems. Until we have one, the point is that science is self-correcting, and that we can discover and discard our mistakes.

More critically, the scientific method is of course vulnerable to exploitation by those who want to use it for odious reasons – but isn’t dogma (for example religious fundamentalism) more so? And, to note that people use the language of science to prop up eugenics doesn’t entail that they used good scientific reasoning to do so. Instead, we can now recognise it as an abuse of the scientific method, rather than a proper application of it.

Guhin has harsh words for Tyson, for the “I f***king love science” crew, and for Dawkins, as I have previously had for all three. But the problem with these evangelists for science is largely a political one, rather than them being wrong on the principle of the matter. If you communicate with others in ways which make it sound like you think they are stupid (Dawkins), people aren’t going to listen. If you communicate in ways which make it sound like you are stupid, for example in dismissing the value of philosophy (Tyson), you’ll also lose part of your audience. And if you just share (sometimes incorrect) memes on a Facebook group, nobody is going to believe you actually love science at all.

Guhin is right to remind us to not appear as evangelical as those we sometimes find ourselves arguing with. I’ve been watching folks on Twitter making the tone-deaf “the evidence must decide” sort of arguments with regard to various debates about race and economics in South Africa, and they don’t get that even if that’s true, social progress also requires not being an ass.

But to conflate “rational thinking” with “scientism”, as Guhin does, is a mistake. Rational thinking can incorporate both following the evidence, and doing so in a way that is aware of one’s own biases, and all of our emotions, needs, wants and fears.

Those are all evidence too, yes. But – crucially – one of the ways we can get along better is knowing when to point that out, versus knowing when to take off our “Mr Logic” caps, and simply relate to each other as human beings.

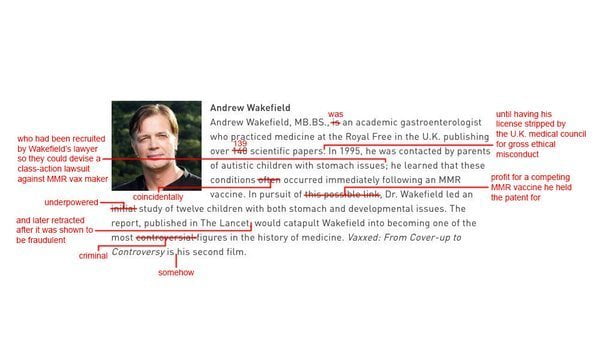

Update: De Niro has now pulled the film from Tribeca

In a statement posted to the Tribeca Film Festival’s Facebook page, Robert De Niro has defended the screening of Andrew Wakefield’s anti-vaccine conspiracy theory movie VAXXED, saying:

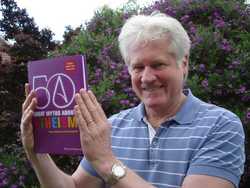

Monday 28 March sees the release of Critical Thinking, Science, and Pseudoscience: Why We Can’t Trust Our Brains, co-written by Caleb Lack and me. Caleb is an Associate Professor of Psychology at the University of Central Oklahoma, who I met at TAM 2013 in Las Vegas.

As Caleb notes in his announcement of the book (where much of the text below is copied and pasted from),

it is based largely around the critical thinking courses that Jacques and I have been teaching at our respective universities. The book is designed to teach the reader how to separate sense from nonsense, how to think critically about claims both large and small, and how to be a better consumer of information in general.

Although it’s being mostly advertised towards the academic market, we have purposefully written it to be highly readable, entertaining, and great for anyone wanting to sharpen (or build from scratch) their critical faculties.

South African readers will be alarmed at the price of the book, which is a consequence of exactly the point Caleb makes above – that it’s been pitched at the textbook market by the publisher. We are hoping to arrange a local distributor, which should bring the price down substantially.

And if you’re planning to attend the Franschhoek Literary Festival this year, I’ll be in conversation with John Maytham about the book and its themes on Sunday, May 15 at 10am.

The early reviews we received are gratifyingly positive. Michael Shermer (Publisher of Skeptic magazine, monthly columnist for Scientific American, and Presidential Fellow at Chapman University) said:

This is the best collection of ideas on critical thinking and skepticism between two covers ever published.

Lack and Rousseau have put together the ideal textbook for students who need to learn how to think, which is to say every student in America.

I plan to assign the book to my Skepticism 101 course at Chapman University and recommend that every professor teaching critical thinking courses at all colleges and universities do the same. Well written, comprehensive, and engaging. Bravo!

Elizabeth Loftus, past president of the Association for Psychological Science, Distinguished Professor at the University of California – Irvine, and one of the foremost memory researchers in the world, wrote:

What’s wrong with believing in pseudoscientific claims and why do so many people do it? Lack & Rousseau take us on a fascinating excursion into these questions and convincingly show us how junk science harms our wallets and our health.

Importantly, they teach us tips for spotting true claims and false ones, good arguments and bad ones. They raise awareness about our “mental furniture” – a valuable contribution to any reader who cares about truth.

Carol Tavris is a social psychologist, and Fellow of the American Psychological Association, the Association for Psychological Science and the Committee for Skeptical Inquiry. She is the coauthor of the textbook Psychology and the (highly recommended) trade book Mistakes Were Made (But Not By Me). After reading our book she wrote that:

Teachers and students will find this comprehensive, well-written textbook to be a helpful resource that illuminates the principles and applications of critical thinking–a skill that is crucial in our world of bombast, hype, and misinformation.

Finally, we have Russell Blackford, noted (and prolific) author (most recently of The Mystery of Moral Authority and Humanity Enhanced) and philosopher.

He’s a Conjoint Lecturer in the School of Humanities and Social Science at the University of Newcastle, a Fellow of the Institute for Ethics and Emerging Technologies, editor-in-chief of The Journal of Evolution and Technology, a regular op.ed. columnist with Free Inquiry, and a Laureate of the International Academy of Humanism.

An entertaining introduction to clear thinking, science, and the lure of pseudoscience. Lack and Rousseau clearly explain the principles of logical reasoning, together with the human tendencies that all-too-often undermine it. They show how easily motivated reasoning can prevail over clarity and logic; better, they offer tools to think more critically, whether in science, policy, or our everyday choices.

For those instructors interested in using this in their class, we have also constructed full lecture slides for the book and an instructor’s guide with sample assignments, recommended videos, and more. Feel free to let our publishers know if you’d like to be considered for an adoption copy.

My title is intentionally misleading, as there are aspects of both the cases mentioned therein that are not a free speech issue at all.

As I pointed out in my previous post on the Gareth Cliff saga, M-Net are, to my mind, perfectly entitled to promote a certain brand image, and this entitlement is compatible with saying that Cliff doesn’t fit that image, and that they are therefore not renewing his contract.

There are at least three clear ways in which pseudoscience or bad science can harm consumers. The first, and most troubling, is that you might come to harm through consuming something that causes effects other than those promised or expected. This harm can be direct, as when herbal preparations result in allergic reactions (for example tea tree oil), or with unexpected drug interactions (thanks to “natural” remedies being regarded as different and non-interacting with conventional medicine).

Or, the harm can be indirect, as is the case with vaccine denialism, which not only exposes the denialist to avoidable risks of serious disease, but also impacts on “herd immunity”, thus threatening his or her entire community. Another form of indirect harm results from not seeking effective treatment, thanks to the false belief that you’ve already found a treatment. Penelope Dingle died of a potentially treatable cancer, after years of being “treated” by a homeopath allowed the cancer to spread beyond real medical science’s capacity to treat it.

The second way in which consumers can be harmed is, for many of us, fairly trivial, and amounts to our simply wasting money on products that can’t deliver on their promises. This is of course not trivial for the poor, or for people who spend significant amounts of money they can’t afford on quackery – but if you like to pop an occasional homeopathic sleeping aid, it’s not going to cost you anything other than the price of a latte and perhaps some friendly mockery around the dinner table.

The third set of harms is a broad category, including harm to public understanding of the scientific method, where claims need to be held accountable to evidence; and violations of consumer trust, where you expect manufacturers to not only support but also deliver on the claims made regarding their products.

It is because of the risk of these and other harms that the Medicines Control Council (MCC) have introduced a requirement that complementary and alternative medicines (CAM) need to carry a label stating that the product has not been evaluated by the MCC, and that it is not intended to diagnose, treat or cure any disease. The CAM industry has been slow to respond – understandably so, because who would want their product to carry a label telling you it’s mere placebo?

Another sort of reaction to this risk, and one we as consumers should be grateful for, is the large community of scientific skeptics all over the world who take the time to document pseudoscientific or misleading claims, and then follow up with complaints to advertising regulators and other relevant bodies when they encounter such claims being made. In South Africa, one such individual is Dr Harris Steinman, a medical doctor who runs the site CamCheck, devoted to exposing misleading and unverifiable claims made on behalf of CAMs.

Manufacturers or promoters of CAM – and, as we shall see, nutritional supplements also – don’t appreciate it when they are criticised for selling something on the basis of unverifiable (or, verifiably false) claims. There are numerous examples of bullying via lawsuit, or threats of lawsuit, both locally and internationally in cases of this nature. To briefly return to the Dingle example mentioned above, bloggers who exposed the homeopath were served with cease and desist letters. Locally, Kevin Charleston was sued for R 350 000 by Solal Technologies, who sell and promote “untested remedies for a range of serious illnesses”.

More recently, Dr Steinman and CamCheck were the subject of a takedown notice from Ultimate Sports Nutrition and their founder Albe Geldenhuys, who alleged that “unlawful comments were posted” and that defamatory “remarks and/or comments” were made by Dr Steinman. CamCheck was subsequently moved offshore.

The claims made in the takedown notice are weakly supported or completely unsupported. In South African law, claims of defamation fail (or, should fail) if the purportedly defamatory comments are true, and in the public interest. CamCheck’s claims are certainly in the public interest, as I outline above with reference to the harms that can accrue as a consequence of pseudoscience or bad science.

Furthermore, as Dr Steinman exhaustively documents in response to the takedown notice, USN have a track record of adverse findings at Advertising Standards Authority hearings (locally, as well as in the UK) related to making unsubstantiated claims with regard to their products, as well as for simply changing the names of, and then re-introducing to the market, products that have been the subject of such hearings.

If you do a Google search for “usn rapid fat loss” – as a consumer of their products might plausibly do – the third link (for me, but it will be a prominent link for anyone) leads you to their “12 Week Rapid Fat Loss Plan” (pdf), which promotes “Carb Block” – a product the ASA ruled against in 2014. If you sell a product which doesn’t do what it says, are you a “scam artist”, as Steinman alleges? On balance of probabilities, it certainly seems a defensible claim, at the very least, and a claim that Steinman could reasonably believe to be true.

USN don’t want their customers to know when products are untested, or contain ingredients that aren’t capable of doing what USN claim they do. As unfortunate as that might be, the takedown notice was only the start of their efforts to make sure their customers don’t get to hear these things.

USN and Geldenhuys have now launched a defamation suit against Dr Steinman, claiming R 1 million in damages each for Geldenhuys and for USN itself.

As with the extended, but ultimately futile, attempts by the British Chiropractic Association to silence Dr Simon Singh’s criticisms, this is little more than an attempt at legal bullying – more obviously so in light of the ludicrous quantum of damages sought.

Some readers might be familiar with the “Streisand Effect”, named after Barbra Streisand, whose 2003 efforts to suppress photographs of her Malibu home ended up simply drawing additional attention to those photographs, thanks to Internet sharing. USN want to keep fair criticism underground, but thanks to this lawsuit, perhaps that criticism will end up more Streisand than silent.

First published by GroundUp. Also read this GroundUp piece for further context on the USN lawsuit.

As Libby Nelson recently observed, “There are probably more articles on the internet arguing about trigger warnings on college syllabuses than there are actual trigger warnings on college syllabuses”.

Even if their prevalence at universities is often overstated, they are frequently encountered on blogs, Facebook and other social media as a device for warning people that the ensuing discussion might contain distressing content.

As I’ve argued previously, trigger warnings can serve as a way to infantilise an audience, especially at universities where part of the point is to be exposed to challenging ideas. But they can equally serve a similar role to advisories on films, where an audience is forewarned that they might be exposed to violence, profanity, and so forth.

We’ve become accustomed to these warnings for films, and I’ve never heard of anyone finding them problematic. In fact, I suspect many more of us would be bemused – whether or not offended – to unwittingly purchase tickets for a movie featuring extreme violence without having been forewarned of this.

So one response to the issue of trigger warnings might be to say, why not include a simple (tw: violence) or somesuch before a discussion or link to an article describing the potentially “triggering” thing?

First, because there’s no consensus among psychologists* that this is the best way to handle the issue, even for people who might potentially be “triggered”. In fact, because “trigger warnings emphasize a victim rather than survivor role for the potential reader”, they end up “potentially increasing distress in the long-term via reinforcement of avoidance behaviors.”

And second, which is my focus here, they can treat us all as unable or unwilling to deal with stumbling upon content that might be distressing, and I worry that over-sensitivity of this sort might dampen expression of and debate on controversial topics.

Anything is potentially distressing for someone, so it’s difficult to see a logical (as in, necessary) end-point for trigger warnings, where there is some content that would never need a warning. And if everything gets a warning, that’s one less thing we need to think about – we don’t need to try and make certain judgments about who the speaker is and the context of the discussion, because that work has been done for us in advance.

The problems are at least two: what if that work has been done poorly, and we’re warned against things we don’t need protecting from? And second, making those judgements might well be a skill worth exercising and preserving.

Of course I’m aware that it’s easier for me to question the value of trigger warnings (to restate, given that I do think they have value: for me to question whether they are sometimes or often overused). And I’m well aware that much questioning of the value of trigger warnings comes from folk who have a profound insensitivity to the distress suffered by the people who often argue for trigger warnings.

But speaking from a position of relative sympathy for selective and thoughtful use of trigger warnings doesn’t mean I’m not concerned at what appears to be thoughtless use of them. To return to the threat of over-sensitivity, mentioned above, we have to be able to tolerate occasional, accidental and/or marginal threats to our comfort, because any other world is practically impossible to arrange.

It would be impossible to arrange for even one person, never mind all of us. So the trigger warning conversation, and sensitivity to it, is a bi-directional negotiation: people who are speaking might need to try and avoid certain surprises (what? when? etc. are questions I’ll leave aside), but people who are listening also need to be as fair as possible in not placing undue responsibility or blame on a speaker.

As I say, I don’t know what we need to be sensitive of, or when. Well, that’s not quite true – I know as well as you do that we socially negotiate rules of conduct with the people we encounter, in a dynamic way. And the trigger warning debate does highlight that the game in question isn’t equitable, in that it privileges those of us who find little, if anything, sufficiently distressing to want to be forewarned of it.

But an equal and opposite overreaction isn’t desirable either. Here’s an example of what I mean, to finish this off. Last night, a friend Tweeted a link to an article in The Guardian, headlined “Sudan’s security forces killed, raped and burned civilians alive, says rights group”.

He was criticised for not including a trigger warning, and his protestation that this was a headline that served as its own trigger warning for the article that followed didn’t satisfy the critic.

This is an overreaction first because, if the word rape appearing in a headline is triggering to you, it’s difficult to understand how you can survive on the Internet at all – there seems no way to arrange for an Internet that isn’t triggering in this way, and the requirement that we do so seems unduly onerous.

Second, and on another practical note, a Tweet is limited to 140 characters and at that sort of length, most of us would take in the Tweet at once, rather than parsing each word. In other words, there’s little or no time for a (tw) to do any substantive work in a headline like that – I don’t think it’s reasonable to think that anyone can see (tw) and then the word “rape” a few characters later, where that (tw) has had a chance to cause you to stop reading, or prepare yourself for that word in any way.

At this point, the trigger warning becomes less a thoughtful application of sensitivity to the interests of others, and more a thoughtless application of a disputed protocol. If we want our social justice concerns and interventions to be meaningful, I don’t think it sensible for us to turn them into clichés.

*Disclosure of potential bias, and a little shameless promo: the author of that post is a friend and my co-author of the forthcoming book Critical Thinking, Science, and Pseudoscience: Why We Can’t Trust Our Brains.