Earlier this year, Owen Frisby (the chairperson of SAAFoST) invited me to give a presentation at the 25th Congress of the Nutrition Society of South Africa. While the majority of speakers at the congress were dieticians and others working in medical science, my focus – as in previous posts and columns – was on poor critical reasoning and hyperbole in science writing, and the negative consequences this might have for public understanding of science. If you care to, you can read the text of my presentation below.

I’m speaking to you today not as a scientist, but as a philosopher – mostly focused on philosophy of science and critical thinking – and as a columnist for various publications, most regularly the Daily Maverick.

It’s been said that the philosophy of science is as useful to scientists as ornithology is to birds, so instead of speaking on that topic, I’ll focus on how issues related to health and nutrition are presented to the public, and whether those who do scientific work and try to communicate it to the public are getting their message across. Also, I want to look at whether it’s the right message at all.

There’s no question that, for most of us, health is important. This doesn’t, however, mean it needs to be treated as a good that always trumps all other goods. It’s entirely possible, and possibly even rational, for an individual to sometimes prioritize goods other than health.

If pursuing such goods comes at a cost to the health of others (for example, second-hand smoke), or at a cost to public welfare in terms of increasing the costs of healthcare for all the rest of us, we can also be excused for wanting to regulate such choices, or to at least disincentivise them in some way.

But one thing that I think is often missed when health is treated as our only or our primary interest is the effect that debates on nutrition, or science more generally, might have on the public’s ability to think critically about evidence and the scientific process.

Our health is a topic that lends itself to over-reaction, panics and, sometimes, the rise of what might appear to be cults, complete with prophets who can lead us from the wilderness of confusion, so long as we trust and obey.

The fact that we mostly do tend to regard our health as a good in itself, and a very important one at that, can lead to our being susceptible to discarding nuanced – and more accurate – understandings of the scientific process and its conclusions in favour of misleading headlines and hyperbole.

So as a starting point, one of the things I hope to persuade you of – seeing as many of you are communicating science in one way or another – is that one of the important lessons healthcare professionals, scientists, and science writers can teach others, including the public and government policy makers, is that things are often uncertain.

We might have very good reason to believe something, yet not feel entitled to claim that we are sure of it. This attitude of epistemic prudence is a reminder and demonstration to laypersons that (in the words of Dara Ó Briain) “science knows it doesn’t know everything – else it would stop”.

The point is, claiming certainty, or adopting a dogmatic stance, not only forecloses debate, but more importantly puts science in the same realm as pseudoscience. Homeopaths confuse the public with unfalsifiable claims, astrologers likewise, and these things waste people’s time and money – and, occasionally, their lives. It’s the fact that we embrace questions, and doubt, that makes the scientific method superior.

To put it another way, being right often starts with embracing the possibility that you might be wrong.

By contrast, much popular discourse, including coverage of important scientific fields in newspapers and on social media, proceeds as if things can be known for certain.

This leads to absurd contestations where things are “proved” and then “disproved” with each new bestseller, and where apparent “authorities” rise and are then quickly forgotten as our attention shifts to the next sensation.

This infantilises the public – not only in treating them as if they are unable to make choices for themselves, but also more literally, in helping to ensure that they can’t make choices for themselves, through misleading them and teaching them to believe in simplified versions of the truth.

Let’s start with a very clear message, as captured in this quotation from the “Bellagio Declaration” of 2013, subtitled “Countering Big Food’s Undermining of Healthy Food Policies”.

The influence of Big Food in preventing public policy initiatives was clearly outlined by Dr Margaret Chan, Director-General of WHO (June 2013):

‘Research has documented these tactics well. They include front groups, lobbies, promises of self-regulation, lawsuits, and industry-funded research that confuses the evidence and keeps the public in doubt. Tactics also include gifts, grants, and contributions to worthy causes that cast these industries as respectable corporate citizens in the eyes of politicians and the public. They include arguments that place the responsibility for harm to health on individuals, and portray government actions as interference in personal liberties and free choice.’

This statement might have come out of a North Korean government press office. It’s infused with hysteria, paranoid thinking, conspiracies, and the evasion of personal responsibility in favour of placing blame elsewhere. And this is why I chose the title I did – big food has turned us into big babies, no longer capable of looking after ourselves.

To very briefly focus on some complex issues that the statement above ignores in favour of promoting paranoia: first, “these tactics” assumes there is a consensus around what the tactics are, and that they are already known to be evil.

“Industry-funded research” is spoken of as if it’s axiomatic that funding has to corrupt, which isn’t necessarily the case. And even if funding does corrupt more often than not, the research can still stand or fall on its own merits, and we can’t dismiss it without looking at the evidence.

The “tactic” whereby “they” “cast these industries as respectable corporate citizens” again implies that they cannot possibly be respectable corporate citizens, and also assumes that we are already in agreement as to what a corporation’s responsibilities are in this regard.

Most importantly, consider the way in which personal responsibility is framed: it simply doesn’t exist, in that it’s someone else’s fault that you’re sick and overweight, never yours.

Arguments that “place the responsibility on individuals” are presented as suspect, so instead of reflecting on your own contribution to your problems, you’re encouraged to find a scapegoat – or better yet, let government do it for you, ignoring those idiots who think the state is interfering with “liberties and free choice”.

In summary, conspiratorial thinking is presented matter-of-factly, and thus normalized, in the course of this hyperbolic statement. And given the statement is from an “authority”, we’re primed to grant it our attention and (perhaps) trust.

But for me, this sort of fearmongering indicates that the World Health Organisation is perhaps less concerned with our cognitive health than with other forms of wellbeing. This statement is effectively bullying, rather than persuading the public – and if you teach people that they can’t think for themselves, you shouldn’t be surprised to find that they stop being able to.

And if you’re primed to spot a pattern involving malice and conspiracy, malice and conspiracy there will be. Eli Pariser’s concept of the “filter bubble” articulates this point well – if you go looking for evidence of Bigfoot on a cryptozoology website, you’ll find it. Chances are you’ll end up believing in the Loch Ness monster too, simply because the community creates a self-supporting web of “evidence”.

When these tendencies are expressed in the form of conspiracies, the situation becomes even more absurd, in that being unable to prove your theory to the doubters is taken as confirmatory evidence that the theory is true – the mainstream folk are simply hiding “the truth” so as not to be embarrassed or exposed.

You are all familiar with the language that becomes common in these sorts of situations, but perhaps that familiarity has made us somewhat less vigilant than we should be. Perhaps we should still take note, and be suspicious, when someone replaces argument with summary terms and slogans like big pharma, big ag, GMOs, organic and FairTrade.

These terms serve as triggers for fear or hope – in general, for reinforcing our biases. They describe folk who are doing these things to us, while we helplessly eat what we are told.

I don’t mean to suggest that these labels don’t refer to some real problems – it’s more that the labels take the place of argument, assume both the presence and magnitude of problems, and encourage us to stop thinking.

Thinking is a good in itself – healthcare choices are just one area where we need to make decisions, and rational decisions are only possible if we are thinking things through.

A sound relationship to evidence, reasoning, and the role of authorities in guiding us towards conclusions is a general virtue. Our failure to cultivate such a relationship won’t only impact choices related to health. Becoming lazy in making any category of choices means tolerating sloppy thinking, which is bad for our health in a different sort of way.

From a macro sort of perspective, here’s something that should give us pause for thought. Even though conversations around diet often involve fear, judgement, hyperbole, panic and so forth, consider:

Food isn’t moral. It’s not immoral, either. It’s morally neutral.

What should we then say about so-called “addictive” foodstuffs? The first thing to remember is the point Paracelsus made in the 16th century – “the dose makes the poison”.

While there might be no safe number of cigarettes to smoke, there will be a dosage of carbohydrates, or sugar, that’s unproblematic in all but the rarest cases.

Let’s look more closely at “sugar addiction”, and addiction in general. Two papers are typically cited as evidence for sugar being addictive, at least in the popular media. But what they mostly reveal is that science journalists no longer read or understand the journals, and that the public – and some professionals – are far too trusting when it comes to the sensational headlines that convey elements of those studies to us.

First, the Avena study, published in Neuroscience & Biobehavioral Reviews in 2007:

Food is not ordinarily like a substance of abuse, but intermittent bingeing and deprivation changes that. Based on the observed behavioral and neurochemical similarities between the effects of intermittent sugar access and drugs of abuse, we suggest that sugar, as common as it is, nonetheless meets the criteria for a substance of abuse and may be “addictive” for some individuals when consumed in a “binge-like” manner. This conclusion is reinforced by the changes in limbic system neurochemistry that are similar for the drugs and for sugar.

Pause there – who might be inclined to consume in a “binge-like” fashion? Perhaps someone with a pre-existing impulse control disorder, who happens to latch on to sugar? In other words, the reverse inference from the bingeing to the sugar might get the causal direction entirely back-to-front. We’ll get back to the neurochemistry later, but also, notice the scare-quotes – the author is hedging her bets, with the text only weakly supportive of any claim to sugar addiction, at least if “addiction” is taken to mean what it normally does.

It is not clear from this animal model if intermittent sugar access can result in neglect of social activities as required by the definition of dependency in the DSM-IV-TR (American Psychiatric Association, 2000). Nor is it known whether rats will continue to self-administer sugar despite physical obstacles, such as enduring pain to obtain sugar, as some rats do for cocaine (Deroche-Gamonet et al., 2004).

Nonetheless, the extensive series of experiments revealing similarities between sugar-induced and drug-induced behavior and neurochemistry lends credence to the concept of “sugar addiction”.

In other words (to get to the gist of the first two sentences above), there are some fairly typical features of what we normally understand as addiction that are missing here – but we’ll call it addiction in any case.

As I’ll argue in a moment, our common understanding of addiction is itself flawed, but I pause here just to note that the rats are supposedly “addicted”, but don’t fit the DSM definition of dependency – in other words, we’re using words rather liberally.

One is perhaps reminded of a line from Lewis Carroll’s “Through the Looking-Glass”, where Humpty Dumpty said: “When I use a word, it means just what I choose it to mean—neither more nor less.”

Then, there’s Johnson & Kenny’s paper in Nature Neuroscience (2010), on junk food and addiction (also conducted on rats):

Notably, it is unclear whether deficits in rewards processing are constitutive and precede obesity, or whether excessive consumption of palatable food can drive reward dysfunction and thereby contribute to diet-induced obesity.

As in the Avena study, we don’t know whether an impulse control disorder is simply being expressed – rather than discovered as an effect resulting from the junk food – in this experiment.

Common hedonic mechanisms may underlie obesity and drug addiction.

Yes, if you grow to like something (or find it rewarding), you’ll seek it out. This does not mean the thing is innately addictive. In fact, Hebebrand’s recently published paper in Neuroscience & Biobehavioral Reviews concludes that if anything, “eating addiction” rather than “food addiction” best captures what’s going on when people compulsively over-eat. The food is an expression, not a cause of the impulse control disorder.

Both of these studies use brain imaging to support their conclusions. I worked for five years as part of a multi-disciplinary team investigating disordered gambling, also with the help of fMRI data, and in that time I got to read enough of the literature on fMRI to know just how misleading it can be, especially as presented outside of the lab.

As Sally Satel (who works as a psychiatrist in a methadone clinic) puts it, brain scanning is “a perfect storm of seduction”, promising “great revelations and great objectivity”. More to the point of my presentation today, it offers the possibility of eliminating your responsibility for what’s wrong with you – we can say, “it wasn’t me, it was my brain!”

Many of you will know this image, but before I go on to talk more generally about addiction, here’s an indication of just how misleading fMRI can be:

The short explanation of why this image is interesting is that it neatly summarises why you can’t reach firm conclusions from fMRI data. This fish is in fact dead, yet the scanner showed signs of brain activity.

fMRI data are suggestive, and weakly so at that, in that they reflect neural correlates of various stimuli, but nothing of the perceived and subjective mental responses to those stimuli.

In slightly more detail: increased blood flow and a boost in oxygen are treated as proxies for increased activation of neurons, and from there we infer what those neurons are doing. We compare that data to a baseline, and subtract the one from the other, averaging out over the many data points of all participants in a study, with software filtering out background noise and creating these seductive images.

But our experimental conditions are imperfect – think of the difficulties of creating appropriate baseline tests, for one – and large sample sizes cost a lot of money. Add to that the fact that our brains can process the same stimuli in different regions – no one specific area can reliably be said to perform the same task for all of us – and it should be clear that it’s far too soon to reach definitive conclusions from fMRI data.

The philosophical problem is one of reverse inference – we reason backward from neural activation to subjective experience. But if identified brain structures rarely perform single tasks, one-to-one mapping between activation in a region and a mental state is very speculative.

The images that get the attention in the media ignore these complexities. As we know, headlines don’t have space for subtleties, and furthermore, novel and exciting claims get our attention. If your fMRI scans can be said to show that sugar is “more addictive than cocaine”, you’re guaranteed some prime media attention, and who can blame you for trying to capitalize on that?

To quote Satel, we can’t tell – yet – “whether fMRI scans indicate an impulse that is irresistible, or one that simply hasn’t been resisted”. But it’s easier to make choices when you believe that there’s a genuine choice to make, rather than a forced one of the kind an “addiction” narrative might suggest.

To put it another way, diminished expectations of agency can lead to diminished agency – if you’re not aware of your choices, it’s more difficult to make choices.

Furthermore, addiction – and here I mean what we more commonly think of as addiction, like with heroin – retains elements of being a voluntary behaviour. It might be more difficult to make certain choices, in certain circumstances, but it’s still possible, and you’re more in control than you might think.

Yes, we see increased dopaminergic action (limbic/reward system) – where expectation is mediated by something known to be an addictive substance, or a correlate of an addiction. But we can’t conclude from this that all volition is lost, or that the addictive substance has “hijacked the brain”.

When we speak of things “changing the brain” in what Satel refers to as the “brain disease” model, we not only frame our choices as being all-or-nothing (sugar is toxic!), we also overstate the significance of changes to the brain in general.

This is because we’re changing our brains all the time, and we like the fact that we can do so. When you learn to play chess, or learn a language, you’re “changing the brain” too.

And what’s forgotten in this “brain-disease model” is how much we can tweak our behaviour – even our most compulsive behaviour – through using our past experience as a guide to influencing our futures through sanctions, incentives, and adjusting the contingent facts of our day-to-day lives.

Addiction – even of the most acute kind – is a behaviour whose course can be altered by the application of sanctions or incentives. Satel describes the brain level of analysis as subject to neurocentrism – the notion that the brain is inevitably the best level at which to explain behaviour.

The neurocentric position is held to be more authentic, more true, and holding more predictive value. And while some brain disorders – Alzheimer’s, for example – can be interpreted in light of this sort of model, addiction is far more complex.

For addiction, the neurocentric view implies that the solution is always a medical one. And yes, methadone does work (for withdrawal), but it can obscure other remedies. Here’s a detail that might surprise you – in the majority of cases, people quit on their own, usually by their early 30s. But we suffer from a confirmation bias here, in that we mostly hear about the people who don’t quit, because these cases are often dramatic, and tragic.

Addiction is also a remitting condition – and in chronic cases, addicts also tend to have depression, anxiety and other confounders. What this means is that there are various options for intervention, and the psychological and environmental tweaks that might help addicts are frequently overlooked in favour of the brain-level explanation.

Here’s a great example from history. In 1971, there was an epidemic of heroin use among US soldiers in South-East Asia. Nixon panicked, and launched Operation Golden Flow. You couldn’t board a plane back to the US unless you tested clear (after having a few weeks to clean up). In July, an estimated 15% of soldiers were using heroin regularly, but two months later, all but 4.5% tested clear. In follow-up studies, 3 years later, only 12% of those who had been dependent had relapsed into addiction.

The addicts who are in control of their dependencies also adopt self-binding strategies. One of the books I recommend most strongly for understanding how volition works is George Ainslie’s “Breakdown of Will”, in which he speaks of “Bright Lines” as ways to hack the brain, given that we know in advance which weaknesses we’re susceptible to.

Motivation, in short, can make a huge impact. And the brain level is not YET the level at which our interventions are the most useful. Addiction and impulse control issues are a human drama, occurring in a context.

But the problem is that we like stories, and the media feed that liking. Sensationalist stories gain traction via our confirmation bias, and our cognitive dissonance – not being able to reconcile the complicated version of events with the sensationalist one – results in the backfire effect, where we double-down on our existing beliefs and shut out dissenting views.

What this adds up to is hysteria and moral panics, with little tolerance for nuance.

Worst of all, sometimes people who work in science contribute to our illiteracy by cherry-picking data, or by presenting science as settled when it’s actually contested. This might sell books and gain a following, but at the expense of our critical thinking skills.

One clear lesson here is that we have a responsibility to be prudent with advice, and to reinforce the character of scientific enquiry in how we operate.

Set this more complex snapshot of addiction against the version currently gaining traction in South African and international media, and it should be clear that a word like “addiction” is being exploited for political and marketing purposes. The intent might be sound, even noble, but is it necessary to mislead, or can the message get through without the hyperbole?

We need to factor in the cost to our critical thinking abilities, as at least a partial offset against the purported health benefits. Physical health does not necessarily trump these other considerations.

We should also be concerned with the costs all of us bear. An establishment in Hout Bay called Harmony Clinic now offers an 8 week online program for R 2 500, as well as a more expensive 28 day inpatient programme, to cure you of your “sugar addiction”.

They also run a “Sugar & Carb Addicts Anonymous” group. What makes this more troubling than a mere middle-class moral panic is, first, the amount of breathless and usually uncritical media coverage it gets, and second, the fact that the programme has now been recognized by Discovery Health – which means we are all paying for quackery.

To briefly touch on some other examples of how popular culture can be nudged into believing some very controversial claims about health, consider the rise of the idea of “real food”, or the “real meal revolution”, which inserts panic regarding GMOs and soy products into dietary advice that can (and should) itself be the subject of critical interrogation, some of which I’m pleased to see happening at this congress.

Leaving details of any particular diet to one side, I’d want to simply pause and ask “What is real food?” Does golden rice, genetically engineered and holding the promise of saving the 670 000 children who die of Vitamin A deficiency every year, count? Or what of the high-yielding wheat developed by Norman Borlaug, the biologist who founded the Green Revolution, which is credited with saving a billion lives?

One sometimes gets the sense that some of these concerns are what folk on social media ironically refer to as “middle-class problems” – for people who are struggling to simply stay alive, you won’t get much sympathy for your GMO-skepticism, or for scaring them with nonsense fears around vaccines and autism.

Here’s another middle-class problem: FairTrade – which is by and large an exploitative cartel. Growers are paid very little for FairTrade coffee, and consumers pay more for it. But growers sign up for fear of being frozen out, thanks to the marketing clout FairTrade has. So it’s either sell something at a lower profit, or sell nothing – which sounds quite a lot like a protection racket, but good luck convincing a hippie of that.

As London’s SOAS reported in their study of FairTrade:

What did surprise us is how wages are typically lower, and on the whole conditions worse, for workers in areas with Fairtrade organisations than for those in other areas.

Likewise with “organic” food – organic farms are currently 80% as productive as conventional ones, so immediately, we need more space to farm in, and more people willing to be farmers.

Science is also unable to support claims that organic food is safer or healthier. In the meanwhile, who pays the price for our affectations? The less well-resourced farmers, who can’t get by without the pesticides and so forth, because they have to concern themselves with yield above all else.

They also can’t afford the extra staff, and organic farming is more time and labour intensive, results in more spoilage, and at the end of the day, less profit.

The lesson might be: when you’re struck with a noble sentiment, try to ensure it’s not being funded by poor people, who don’t have the luxury of choice.

Also, organically reared cows burp twice as much methane as conventionally reared cattle – and methane is 20 times more powerful a greenhouse gas than CO2 is. So future generations might be a little less than pleased at your organic food fixation.

Again, as with FairTrade, there are various cartels controlling organic certification, in a process that results not only in various confusing standards, but also creates a market for regulatory bodies to interfere with choice.

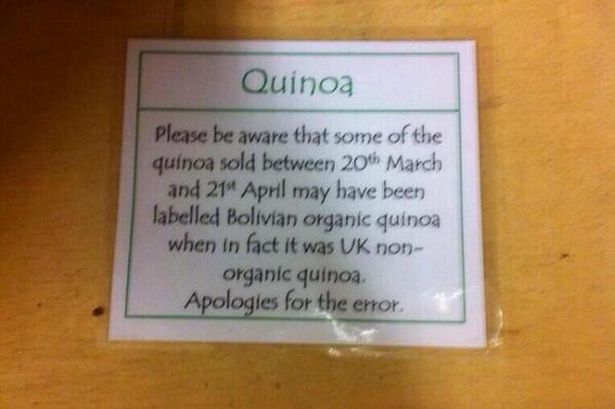

We end up with something like a religion, as in the potential need for separate bread slicers!

It’s rarely true that people can’t think for themselves – if we allow them to. As I hope I successfully conveyed earlier, even addicts have all sorts of lucid periods, figuring out coping strategies for themselves.

Furthermore, the things we’re attracted to – even drugs – serve a purpose in our lives, and we need to understand that in our responses too. We’re inclined towards thinking that one model of health is compulsory for all of us – but what counts as self-harm on your definition does not always need to be pathologised.

The problem with these panics, and with “science” and science reporting being infused with scaremongering, is that the state will turn to paternalism. Some things – that are always unhealthy – deserve serious nudging. But as I mentioned earlier, there is a safe level of sugar, wheat and so forth, and diets shouldn’t be medicalised all the time – it’s food first, rather than medicine. And food should be fun.

When nudges are appropriate, for example through taxes, or increased healthcare premiums for smokers, the most important question is simply whether they work or not – food should not become a moral battleground.

As you know, South Africa is now talking about a sugar tax. There are reasons for pessimism, and for thinking this a moralistic move rather than a pragmatic one. Alcohol taxes here are not very effective, and cigarette taxes haven’t increased the fiscus greatly, thanks to cross-border smuggling.

But even in more coherent economies, results are poor. Bloomberg’s soda tax didn’t work, and created perverse incentives such as people simply buying two sodas. Denmark’s “fat tax” lasted only a year, and in the meanwhile, authorities said the tax had inflated food prices and put Danish jobs at risk. The Danish tax ministry has also said it was cancelling its plans to introduce a tax on sugar.

I’m arguing that we are able to take responsibility for our choices, but have been trained into doubting that, and now need someone to blame for our poor choices. Even if there is more unhealthy food available than ever before, we’re eating more of it than ever before too – and that’s our fault.

On top of that, we’re moving less too, spending all our time in cars, in front of televisions or computer screens, perhaps reading about how the evil food producers are making tasty food.

There’s no reason to think it’s a conspiracy – they are making the food that we ask for. We’ve taken 3 decades to reach our current levels of obesity – and now that we cotton on to the fact that many of us need to learn new habits, and in general simply eat less, why not give that message a little time to sink in, instead of rushing to legislate/medicalise?

A short-term reaction involves panicking, and governments telling us what to do, perhaps gaining some revenue through taxing the things we’re told have made us sick. A longer-term – but more sustainable – solution involves education, teaching people to think critically about evidence, and encouraging them to take responsibility for their choices.

Yes, of course non-communicable diseases are a problem – but we’re still living longer than ever before, which means the story is on the whole positive, rather than catastrophic. This is something that’s easy to forget when only focusing on the panics.

Advertising might well be a contributing factor to buying unhealthy food – but it’s parents who buy the food, not the kids, and parents can understand the risks if they choose to. Meanwhile, we can’t blame companies for making their products attractive – that is, after all, their job. Of course food is engineered for our pleasure. Would we want it any other way?

We have no compelling reason to doubt that the food market – and “unreal” food, whatever that might mean – can develop into being healthier. And to some extent, our interests and those of the food producers are aligned – after all, the longer we live, the more product we buy!

Nuance in these debates is sacrificed for hyperbole, and slacktivism replaces activism in the field of education in critical reasoning about science. In the world of social media and short attention spans, we overstate the value of our own experiences, and generalise from them to universal truths.

A collection of anecdotes does not equal data, but that’s difficult to remember when temperatures run as hot as they do.

The democratization of knowledge via the Internet has brought real boons to society. But it can also make us forget that real scientific breakthroughs happen in journals, not in bestselling cookbooks. And you hear about them on the news, not on the Dr Oz show.

It’s our job to fight for nuance, and to demonstrate, partly through showing that we’re willing to embrace uncertainty, what the value of the scientific method is, and why our best-evidenced conclusions should be preferred to conspiracies and folk wisdom.

And we devalue our scientific currency, or credibility, when we assert certainty – and we do the political cause of science harm, in teaching people that there are easy answers.

Our worth as scientists or science communicators is not vested in conclusions, but in the manner in which we reach conclusions. It’s not about merely being right. Being right – if we are right – is the end product of a process and a method, not an excuse for some sanctimonious hectoring, or dietary evangelism.

Sometimes we need to remind ourselves of what that method looks like, and the steps in that process, to maximize our chances of reaching the correct conclusion. Focusing simply on the conclusions rather than the method can make us forget how often – and how easily – we can get things wrong.

As Oscar Wilde had it, “the truth is rarely pure and never simple”.