To make my biases clear at the outset, I’ve been appalled at how Donald Trump has been fomenting racism, sexism, and political polarisation ever since he ran for office (he was doing so before, but in a less impactful way).

Tag: censorship

As much as being religious can interfere with people’s ability to think objectively about moral issues, it’s sometimes the case that antipathy to religion can do so, too.

The former problem arises because people can approach moral problems with unquestionable fundamental rules in mind, given to them by a divine force, and the latter because we should really only care about other people’s values when those values might result in harm, and not simply because we find them silly.

While I would have wanted to submit comment to Independent Media’s panel on what to do about abusive speech on newspaper comment sections, I somehow missed the call for submissions.

Now that their report is out, and on the understanding that this is a continuing conversation, I’ve offered a few comments on the panel’s recommendations – and the underlying issues – below.

TheMediaOnline’s summary of the panel’s findings seems comprehensive and accurate enough to simply quote, instead of doing the work of summarising them myself:

- In the interests of freedom of expression, it is desirable to host online comments

- However, the constitutional rights of readers and members of the public should not be infringed by such comments

- It would be preferable to moderate comments prior to their publication online

- Online platforms should be staffed with suitably qualified personnel

- If effective pre-moderation cannot be undertaken for any particular reason, Independent should consider closing its comments section

- Independent Media should develop guidelines to define unacceptable speech, which take into account legal and ethical considerations, but should not amount to censorship of differing viewpoints

I’ll not be addressing those points sequentially, and might not even get around to addressing all of them. But the free speech issue is one that does have to be addressed, if only to emphasise an important distinction.

Free speech

A commitment to free expression makes it desirable, rather than necessary, to host online comments. In other words, even if you shut comments down entirely, you are not violating anyone’s right to free expression.

The right to free expression means that you’re not barred from saying something. It does not mean being required to provide you with the platform on which to say it. So, for as long as you can make your point on your own blog, Facebook, Twitter or wherever, your rights are not being violated.

Your opportunities are being circumscribed, yes, but a private entity like a media house has no legal or moral obligation to provide you with an opportunity to comment. In fact, an overall commitment to free expression might mean exactly barring some people from commenting, on the grounds that they have a chilling effect on the comments of others.

A practical example: if you wanted to have a comment-section discussion on what it’s like to be black and poor in Cape Town, you’d naturally get fewer people who are black and poor commenting if you also allowed white racists to comment.

More problematically: you might also get fewer black and poor people commenting if you simply allowed rich people to comment, in that the target audience might feel some measure of alienation or of being typecast or misunderstood.

I use these examples not to recommend these sorts of constraints on comment spaces, but in furtherance of the general point that specific restrictions on who can comment where might, in certain instances, enhance freedom overall. The point is that freedom of expression in the aggregate can sometimes be served by restricting limited instances of free expression.

Legality vs. tone/character

The right to free expression and the extent to which it is (or isn’t) violated is a separate matter from the tone or character of a website. As soon as you allow comments at all, you’re encouraging the formation of some sort of community, and with that come goals as to what the character of that community should be.

What this means is that even if something is not legally proscribed, you might nevertheless want to prevent it from being said. The Independent report makes much play of the right to dignity in the Constitution, but I’d rather not rest on that, because even if you think the Constitution has it wrong on things like hate speech and dignity, you could still justify restrictions on some speech on your news portal.

You could justify them simply via wanting to have a certain level of discourse on your platform, where you hold characteristic X to be non-conducive to that. X could be excessive sarcasm, or whatever – for example, I didn’t publish a comment the other day simply because it was overly pedantic and argumentative, and added no value to the conversation.

What you choose to restrict and why will be a policy matter for each media house to decide on for themselves, and I make the points above to encourage them to be guided by more than just the law when they deliberate on these matters.

The value of comments

The high-minded rhetoric around why we have comments online (free expression, debate etc.) – at least when it comes from the media houses themselves – is only part of the story, and to my mind a very small part of it.

The value that comments have for them is that eyeballs return to their pages, either to watch the slow-motion car crash of someone being schooled or trolled in comments, or to join in the fun themselves. Either way, you’re on my page rather than a competitor’s, and ad revenue might increase as a result.

And (we need data here) I’m intuitively completely disbelieving of the idea that there will be a significant difference in traffic if you switch from live, unmoderated commenting to some system that involves comments being posted after a delay of some sort. Of course a delay of days might have an impact, but I doubt that 12 hours or less would.

What to do?

The panel’s report recommends pre-publication moderation, where a) the commenter’s identity is known to the publisher, even if the comment appears anonymously; b) word-filters flag any potentially offensive comments (for containing words likely to correlate with abusive comments); and c) editorial oversight, where “trained and qualified” editors check the comments before publication.

Step (c) is onerous, and unduly so in not taking advantage of existing mechanisms for knowing who is likely to abuse comment sections and who not. But before I get to the disagreement, let me say where I agree.

Identity: I’m a big fan of people “owning” their opinions, and taking responsibility for them. To put it simply, the fear of reputational harm is one of the ways we are kept in check, and keep each other in check.

So I’m supportive of using the “letter to the editor” sort of model where possible – use your real names, which need to be verified in some fashion, unless there’s some compelling reason why you can’t (where the editor must decide on the merits of that reason, remembering, as I said above, that you have no right to comment).

Word-filters: You’ll perhaps get lots of false positives here, so sifting through the stuff that’s flagged might involve more work than is necessary. But besides this potentially adding unnecessary overhead, I have no principled issue with it.

Editorial oversight: Making this the norm will be far too expensive and time-consuming (of course related issues, but manifesting as two separate problems). It would also be unnecessary, as we already have ways to crowdsource information regarding who can (in general) be trusted to not abuse comment sections.

For example, here on Synapses I use Disqus, as do IOL, Daily Maverick and the Mail & Guardian. I’ve set each post up with fully moderated comments, but I do have the option of specifying that any given commenter be automatically published without going into moderation.

Any of us who dip our toes into comment spaces online know the names of some regulars. Those regulars who are not abusive can be approved pre-publication. Yes, it will take some time and work to determine who is given this privilege, but in the long run, it would save having to look at their comments each time.

Of course, you’d want some sort of policy for granting this privilege – say, for example, 5 non-abusive comments gives you that status, and the understanding is that it gets stripped from you once you abuse it.
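To make that policy concrete, here is a minimal sketch in Python of how such a rule might be tracked. It is an illustration only: the class and method names are hypothetical, it has nothing to do with how Disqus or any media house actually stores this information, and the threshold of 5 is simply the example figure mentioned above.

```python
from dataclasses import dataclass, field

# Example figure from the text above; a publisher could pick any number.
TRUST_THRESHOLD = 5


@dataclass
class CommenterRecord:
    clean_comments: int = 0  # non-abusive comments seen since the last offence
    trusted: bool = False    # True once the commenter may skip moderation


@dataclass
class TrustRegistry:
    """Hypothetical register of which commenters may bypass pre-moderation."""
    threshold: int = TRUST_THRESHOLD
    records: dict = field(default_factory=dict)

    def _record(self, name: str) -> CommenterRecord:
        # Create a blank record the first time a commenter is seen.
        return self.records.setdefault(name, CommenterRecord())

    def needs_moderation(self, name: str) -> bool:
        return not self._record(name).trusted

    def mark_clean(self, name: str) -> None:
        # A moderator has approved a comment as non-abusive.
        rec = self._record(name)
        rec.clean_comments += 1
        if rec.clean_comments >= self.threshold:
            rec.trusted = True

    def mark_abusive(self, name: str) -> None:
        # The privilege is stripped as soon as it is abused.
        rec = self._record(name)
        rec.clean_comments = 0
        rec.trusted = False
```

A moderator (or intern) would call `mark_clean` or `mark_abusive` after reading a comment, and the site would check `needs_moderation` before deciding whether a new comment goes straight up or into the queue. Whether an offender should be allowed to earn the status back is a policy choice; this sketch assumes they start again from zero.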

The level of moderation required to afford people the privilege described above is rudimentary – interns, student journalists in university courses, bored college kids etc. could all do it for a nominal fee, and at the same time flag potentially abusive comments for the attention of a “real” editor.

All of the benefits listed in section 7.2 (page 33) of the report can be enjoyed once you have established this sort of “database” of approved commenters. If you wanted to be more liberal about it, and save even more time, someone with a high reputation score on Disqus can automatically be green-lighted.

One could even consider a database of trustworthy commenters, shared across media houses that use the same commenting platform.

And as I said above, if someone sins, you simply delete them – here again, the community can be of assistance, in flagging things for an editor’s attention.

As submitted to Daily Maverick.

It’s sometimes difficult to escape the feeling that we’re living under the tyranny of the perpetually indignant. Taking the time to think things through and developing a measured response to some hot-button issue is a luxury we’re infrequently allowed. Not only do media outlets thrive on sensation, but readers are also often eager to be the first to express outrage at some new conspiracy, malfeasance or instance of ineptitude.

And so those hot-button issues can get generated out of thin air, then recycled and amplified in the echo-chamber of Twitter and other social media. Last week, Twitter itself became the latest subject of hysterical misinterpretation when they announced their new policies for blocking tweets. As of January 26, tweets (or Twitter accounts) can be blocked on a country-by-country basis rather than globally, as was the case before software refinements made selective blocking possible.

The Forbes headline “Twitter commits social suicide” summed up many of the responses, which made accusations of censorship and complicity in killing free speech trend under the hashtag #TwitterBlackout. Some even suggested that the once-plucky underdog had now sold out, and was caving to the (purported) illiberal demands of their new investor, Saudi Prince Al-Waleed bin Talal.

But bin Talal only purchased a 3% stake in Twitter, and we have no evidence that he has any interest in dictating policy. We also have no evidence that Twitter’s policy change is a bad thing for free speech. In fact the opposite seems a far more plausible reading, which makes it all the more a shame that most of the indignant seem to not have bothered to read the policy itself.

It is not the case that Twitter will be monitoring your delight at having found your car keys (in the last place you looked!) or your #occupation of some patch of suburban scrubland. Any blocking (or censorship, for that is what it amounts to) will be reactive rather than proactive, where a party with legal grounds for requesting a takedown of tweets or an account lodges an application with Twitter to do so.

This has always been Twitter’s policy. For example, evidenced claims by film studios of copyright infringement have led to tweets being deleted. The difference between the old policy and the new is that, instead of those tweets being deleted globally, they will only be blocked in the country where that tweet violated the law. If you tweet some pro-Nazi sentiment in Germany (where doing so is illegal), Germans won’t be able to see the tweet but the rest of the world will.

In other words, more people can now see the tweet than was the case before. And if you’re planning a revolution on Twitter, you could always tell your fellow Bolsheviks to simply follow Twitter’s own instructions for changing your country settings to “worldwide”, thereby allowing you to see any tweets, no matter how repressive your situation might be.

What’s more, users in countries where tweets have been blocked will be able to see that something or someone has been blocked. And here Twitter has again done its best to increase rather than decrease transparency, by committing to posting the details of who requested the censorship at Chilling Effects. The “Streisand effect” shows us how exposing attempts at censorship will tend to increase the dissemination of the undesirable material – here made easy not only by changing your Twitter settings, but also by the fact that the same undesirable material, if originating outside the censoring country, will not be blocked by Twitter.

In short, then, Twitter has done nothing to increase the likelihood or frequency of censorship, but instead attempted to obey the laws pertaining in certain jurisdictions without affecting information flow in others. It’s a positive move, and is being conducted in a fully transparent and defensible way. On balance, there’s good reason to suppose it could result in increased protection of free speech.

But for the #TwitterBlackout crowd, evidence takes a back seat to indignation. Some indignation is of course justified – it shouldn’t be the case that governments attempt to censor speech (arguably, outside of some narrowly-defined cases). That they do so is not Twitter’s fault, and there is nothing that Twitter can do about it. Taking a stand against censorship by refusing to obey local laws would simply result in the complete unavailability of the service, as is the case in China.

We advocates of free speech, and those campaigning for other causes, can forget that our idealised version of the world collides with the real worlds of politics and pragmatism. It’s not Twitter’s job to share your or my ideological commitments, and to run the risk of being shut down in more places than only China. Here, it’s governments that are censoring, and Twitter is doing its best to minimise the effects of that censorship while spreading its global reach for the sake of profit. That’s their job.

Those of you on Facebook can enjoy a few minutes of entertainment at the expense of some frothing at the mouth fundumbentalists, who are incensed at Woolworths’ decision to pull some Christian magazines from their shelves. The very Christian homophobe Errol Naidoo was quick out of the starting blocks, sending out a newsletter headlined “Christianity Not Welcomed At Woolies!” while the story was breaking on News24 and TimesLive.

Naidoo is apparently suffering from some memory loss to accompany his dementia.

As submitted to The Daily Maverick

Free speech is not the only value that democratic societies subscribe to. Nor does, or should, our commitment to free speech always have to trump competing values such as national security or personal dignity. But the principle of free speech nevertheless stands in need of exceptional, and exceptionally strong, counterarguments in cases where we are told that it is not permissible to broadcast or publish any particular point of view.

This commitment to an open marketplace of ideas rests on the belief that each person should have access to the points of view in circulation, so that he or she is able to exercise their right to moral independence by considering the ideas themselves. As Mill reminds us, compromising free speech costs us both the opportunity to hear things that are true, which can help to correct errors; and also to hear things that are false, where the truth is strengthened by “its collision with error”.

Here’s a sense of what awaits:

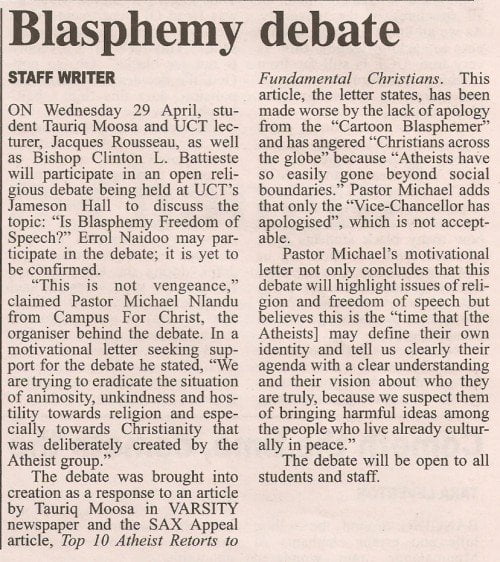

Part of me wonders whether I shouldn’t spend the bulk of my allotted time simply explaining the mistakes in the Michael Nlandu sentences, as quoted above. I’ll only have 15 minutes, after all. But no, as he says: “this is not vengeance”, so I’ll focus on the problems associated with burying one’s head in the sand more generally, rather than picking on poor Pastor Michael.

Note: While a few paragraphs towards the end of this are verbatim repeats (or slight edits) of content from a previous post, I considered the repetition justifiable as this post attempts to make a broader point, using the same example.

One way to divide nature – at least human nature – at its joints is to observe that the ordinary person’s approach to epistemology is that of either naturalism or supernaturalism.

Naturalism, in broad summary, holds that epistemology is closely connected to natural science. There is an increasing tendency amongst naturalists to hold that social sciences which do not verify their findings through results in the natural sciences are at best placeholders for an eventual, more mature, position which does incorporate the findings of the natural sciences, or, at worst, are epistemologically useless.

Cognitive science, as well as more general research in the fields of decision-science and heuristics of decision-making, allows us to understand far more about what people believe, and why, than we could previously understand. Despite this, much activity in social science proceeds as if these scientific revolutions are not occurring around them, and as if we are still somehow adding value by theorising about culture, literature or individual psychology.

Mybroadband.co.za – one of the largest online South African communities – seem to be following the terrible precedent set by some of the responses to Sax Appeal, and will henceforth not allow religious discussion on their forums. The decision to do so is reported to follow “getting many complaints of intolerance, blasphemy and the like”. This serves as another example of hypersensitivity winning the day, and of special treatment being afforded to the complaints of the religious. There is cause to doubt that this censoring move is premised on a desire to simply eliminate controversy on the forums in question, as that would require limiting discussion of topics that offend people like me: intelligent design, moral argument based on metaphysical premises, or philosophically illiterate discussion of materialism, to mention but a few threads that can also be found on those forums. We’ll wait to see whether topics such as those are moderated or banned. If so, I have no complaints – there are more than enough places that do allow robust debate on matters metaphysical.